ML Inference Latency and Cost Evaluation Platform

An internal ML platform for benchmarking latency, throughput, GPU utilization, and cost per request so teams could ship models with consistent release criteria.

One-liner: In eight weeks, this internal platform turned model serving from a black box into a measurable, governable system, cutting cost per request by 43% on the LLM serving path while stabilizing p99 latency around 420 ms.

Executive summary

Before this platform existed, the company had multiple teams shipping inference services with inconsistent deployment patterns and almost no shared measurement discipline. Some services ran through TorchServe, some through ONNX Runtime, and others through KServe-based paths. GPU utilization collapsed at night, latency spiked during peak windows, and nobody could answer a simple executive question: what does one production inference request actually cost?

This project created a single evaluation layer for production inference. It combined online metrics, cost attribution, and model profiling so that every deployed model could be evaluated against the same operational standard. That changed the conversation from subjective performance debates to model-level release decisions backed by latency, throughput, GPU utilization, and cost per request.

| Metric | Before | After | Why it mattered |
|---|---|---|---|
| Cost per request | baseline | -43% | GPU class changes and serving optimization materially lowered unit cost |
| GPU utilization | 36% | 54% | Idle capacity was converted into usable production throughput |
| p99 latency | unstable at 400-900 ms | stable around 420 ms | The platform made tail latency visible and governable |
| Alerting on SLA / cost drift | fragmented | standardized | Incident response and rollback became much faster |

The closest related materials on this site are Production ML Release Gates and the MLOps and Reliability topic page. This case study is what those principles look like when applied to model serving economics.

Why the business needed this platform

The business issue was not abstract platform hygiene. It was operational waste.

- Teams were deploying models with different serving stacks and different metrics.

- GPU resources were underutilized during low traffic and overloaded during peaks.

- Finance could see cloud and infrastructure spend, but not cost at the model-request level.

- Infra teams were manually constraining nodes to stay within budget, sometimes at the expense of production stability.

That combination creates a predictable failure mode: nobody can compare serving strategies honestly, so optimization becomes anecdotal. The platform existed to replace anecdotes with evidence.

What the platform actually did

The platform measured and exposed four production signals together:

- latency percentiles such as p50, p90, and p99

- throughput under real load

- GPU and CPU utilization

- cost per request at the model and namespace level

That seems simple, but the value came from making these signals comparable across teams and serving frameworks. Once the system was live, teams could answer questions like:

- Should this model stay on V100 or move to T4?

- Is batching helping throughput or just hiding tail latency?

- Is a quantized model actually cheaper after accounting for throughput and idle time?

- Which service is burning budget without improving user-facing value?

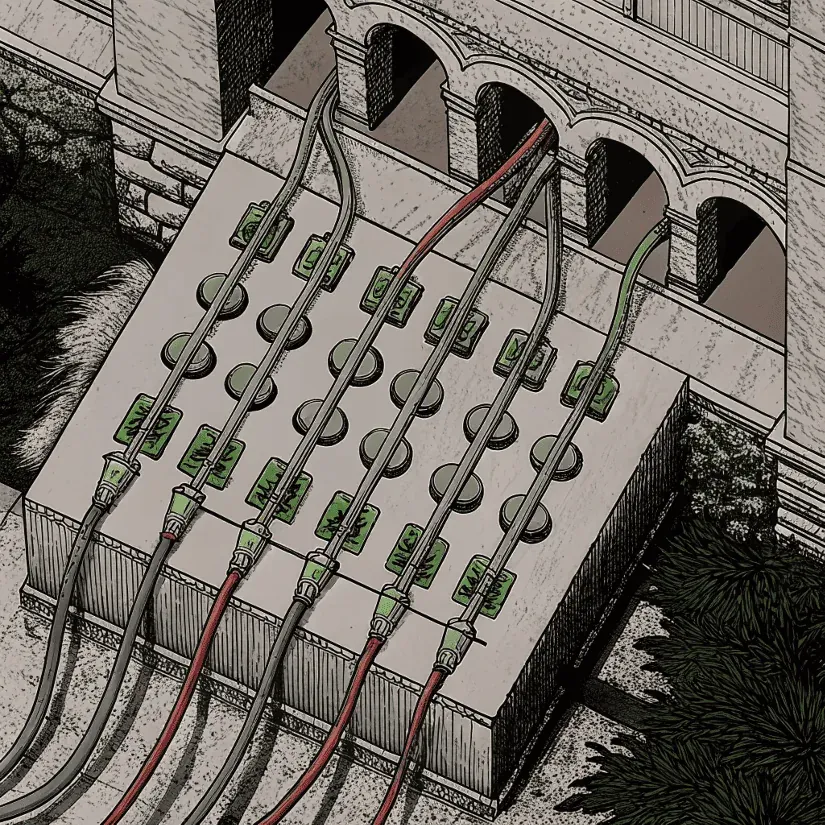

Architecture

Evaluation pipeline linking serving telemetry, profiling, cost attribution, and model-level release decisions.

The implementation sat on top of the existing Kubernetes environment and treated inference services as measurable units rather than opaque applications.

Metrics path

Each inference service exposed a Prometheus-compatible metrics endpoint with:

- latency histograms

- request throughput

- GPU utilization

- CPU utilization

This created a shared measurement surface whether the underlying service was PyTorch-first, ONNX-first, or routed through Triton.
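Latency histograms only become comparable percentiles once the buckets are turned into a quantile estimate. The sketch below shows the usual bucket-interpolation idea in plain Python. It is a simplified illustration, not the platform's actual query: the function name `estimate_quantile`, the bucket layout, and the sample counts are all hypothetical.

```python
import math


def estimate_quantile(q, buckets):
    """Estimate a latency quantile from cumulative histogram buckets.

    `buckets` is a list of (upper_bound_seconds, cumulative_count) pairs,
    sorted by upper bound, with the last bound equal to math.inf.
    Values inside a bucket are assumed to be spread uniformly, so the
    estimate is only as precise as the bucket boundaries.
    """
    if not 0.0 <= q <= 1.0:
        raise ValueError("q must be between 0 and 1")
    total = buckets[-1][1]
    if total == 0:
        return math.nan  # no observations yet

    rank = q * total
    prev_bound, prev_count = 0.0, 0
    for upper, count in buckets:
        if count >= rank:
            if math.isinf(upper):
                # The quantile falls in the overflow bucket: the best we can
                # report is the highest finite bound.
                return prev_bound
            in_bucket = count - prev_count
            if in_bucket == 0:
                return prev_bound
            # Linear interpolation inside the bucket.
            fraction = (rank - prev_count) / in_bucket
            return prev_bound + (upper - prev_bound) * fraction
        prev_bound, prev_count = upper, count
    return prev_bound


# Hypothetical cumulative counts for one service (bounds in seconds).
example_buckets = [(0.1, 50), (0.25, 90), (0.5, 99), (1.0, 100), (math.inf, 100)]
p50 = estimate_quantile(0.50, example_buckets)
p99 = estimate_quantile(0.99, example_buckets)
```

Because the estimate depends on bucket boundaries, services are only comparable if they share the same bucket layout, which is one reason a common metric model matters.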

Profiling path

The platform also supported active profiling:

- PyTorch Profiler for deep latency and resource diagnostics

- ONNX Runtime tracing exported to JSON and visualized in Perfetto

- manual and threshold-based review of hot paths after retraining or performance regressions

The point was not to run profilers all the time in production. The point was to have a standard path for proving where time and money were being spent.

Cost attribution

Kubecost plus spot-instance price mapping provided billing attribution down to the model-serving layer. This is what allowed engineering and finance to speak about the same system with the same vocabulary. Without cost attribution, performance optimization almost always overfits to latency alone.
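The arithmetic behind cost per request is simple once attribution data exists. The following is a minimal sketch of that calculation, assuming the attribution layer can supply an hourly node price and the share of the node charged to a model. It does not call Kubecost; the `ServingWindow` type, its field names, and the prices are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServingWindow:
    """One billing window for one model (all fields are illustrative)."""
    model: str
    node_hourly_price_usd: float  # assumed to come from a price mapping
    node_share: float             # fraction of the node attributed to the model, 0..1
    hours: float                  # length of the window
    requests: int                 # requests served in the window


def cost_per_request(window: ServingWindow) -> float:
    """Attributed infrastructure cost divided by requests served."""
    if window.requests <= 0:
        raise ValueError("cost per request is undefined without traffic")
    if not 0.0 <= window.node_share <= 1.0:
        raise ValueError("node_share must be between 0 and 1")
    attributed_cost = window.node_hourly_price_usd * window.node_share * window.hours
    return attributed_cost / window.requests


def cost_per_million(window: ServingWindow) -> float:
    """Same figure scaled to one million requests for easier reporting."""
    return cost_per_request(window) * 1_000_000


# Hypothetical numbers: the same node and share, busy hour versus quiet hour.
busy = ServingWindow("example-model", 2.0, 0.5, 1.0, 100_000)
quiet = ServingWindow("example-model", 2.0, 0.5, 1.0, 10_000)
busy_cost = cost_per_million(busy)
quiet_cost = cost_per_million(quiet)
```

The window is billed whether or not traffic arrives, so the quiet hour costs more per request than the busy one. That is why idle time has to be part of any comparison between GPU classes or quantized variants.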

Release discipline and operational value

This platform worked because it did not stop at dashboards. It informed rollout decisions.

Governance ladder from profiling and comparison through pricing, decision, and promotion.

A healthy model version now moved through a consistent ladder:

- profile the candidate

- compare latency, throughput, and utilization against baseline

- calculate cost per request under the target infrastructure class

- promote only if the model improved the business trade-off instead of just one technical metric

This is the operational layer that many ML teams skip. They test accuracy and perhaps average latency, but they never build a system that connects model behavior to production budget. This platform closed that gap.
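The ladder above can be sketched as a small gate function. This is an illustration under stated assumptions rather than the platform's real promotion logic: the `ServingProfile` type, the `evaluate_release` name, and the specific rules (a p99 budget, a throughput floor, and cost no worse than baseline) are hypothetical choices for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServingProfile:
    """Measured signals for one model version on one infrastructure class."""
    p99_latency_ms: float
    throughput_rps: float
    gpu_utilization: float      # 0..1, reported for context, not gated here
    cost_per_request_usd: float


def evaluate_release(baseline, candidate, p99_budget_ms, min_throughput_rps):
    """Return (promote, reasons); `reasons` lists every failed check."""
    reasons = []
    if candidate.p99_latency_ms > p99_budget_ms:
        reasons.append(
            f"p99 {candidate.p99_latency_ms:.0f} ms exceeds budget {p99_budget_ms:.0f} ms"
        )
    if candidate.throughput_rps < min_throughput_rps:
        reasons.append(
            f"throughput {candidate.throughput_rps:.0f} rps below floor {min_throughput_rps:.0f} rps"
        )
    if candidate.cost_per_request_usd > baseline.cost_per_request_usd:
        reasons.append("cost per request is higher than baseline")
    return (not reasons, reasons)


# Hypothetical profiles, for illustration only.
baseline = ServingProfile(450.0, 200.0, 0.36, 0.0010)
candidate = ServingProfile(420.0, 220.0, 0.54, 0.0006)
promote, reasons = evaluate_release(
    baseline, candidate, p99_budget_ms=500.0, min_throughput_rps=150.0
)
```

Returning every failed check, instead of stopping at the first one, keeps the decision explainable when a candidate wins on one metric and loses on another.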

What the profiling revealed

The most useful findings were not exotic. They were concrete and expensive.

One example: CPU-side transforms in the image-to-tensor-to-device path were taking up to 40% of inference time on one critical workload. The profiler made that visible quickly. Another example: a GPU class change from V100 to T4 looked risky in the abstract, but the platform showed that the move cut cost per request substantially while preserving the required latency envelope for the actual production profile.

That is why the project mattered. It did not just say “this service is slow.” It said where it was slow, why it was slow, and whether fixing it was financially worth doing.
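A finding like "preprocessing takes a large share of inference time" comes down to attributing wall-clock time to stages. The sketch below shows that idea with a small standard-library timer. It is a stand-in for illustration, not PyTorch Profiler and not the tooling used on the project; the `StageTimer` class and the stage names are hypothetical.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock time per named stage of a request."""

    def __init__(self, clock=time.perf_counter):
        self._clock = clock  # injectable so the timer can be tested
        self.totals = defaultdict(float)

    @contextmanager
    def stage(self, name):
        start = self._clock()
        try:
            yield
        finally:
            self.totals[name] += self._clock() - start

    def shares(self):
        """Fraction of total measured time spent in each stage."""
        total = sum(self.totals.values())
        if total == 0:
            return {}
        return {name: spent / total for name, spent in self.totals.items()}


timer = StageTimer()
with timer.stage("preprocess"):
    # Placeholder for CPU-side work such as decoding and resizing.
    pixels = [value / 255.0 for value in range(10_000)]
with timer.stage("forward"):
    # Placeholder for the model call.
    result = sum(pixels)
stage_shares = timer.shares()
```

One caveat: GPU work is typically launched asynchronously, so wall-clock timing around a GPU call can understate its cost unless the device is synchronized first. A framework profiler handles that attribution, which is why it is the better tool for deep diagnostics.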

Results after rollout

The post-launch impact was straightforward and material.

| Signal | Before | After | Interpretation |
|---|---|---|---|
| p99 latency | 400-900 ms swings | stable around 420 ms | Tail behavior became predictable enough to manage under SLA |
| GPU utilization | about 36% | 54% | The same infrastructure delivered more useful work |
| Cost per request on LLM path | baseline | -43% | Infrastructure and serving changes paid for themselves |
| Cost / SLA alerts | inconsistent | automatic | Failures became operationally visible in seconds |

One concrete incident made the platform’s value obvious. A spot instance failed overnight and the serving path started showing a threefold cost spike. The alert fired immediately, the team rolled the configuration back quickly, and the financial damage stayed bounded. Without the platform, that issue would likely have been discovered later through cloud spend, not through the serving control loop itself.
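A cost-drift alert of this kind can be expressed as a ratio check against a baseline. The sketch below is one plausible rule, not the alerting configuration actually used: the function name `should_alert`, the 2x threshold, and the three-sample requirement are assumptions chosen for illustration.

```python
def should_alert(baseline_cost, recent_costs, threshold_ratio=2.0, min_consecutive=3):
    """Return True if the latest samples all exceed the drift threshold.

    `baseline_cost` is the expected cost per request; `recent_costs` is a
    chronological list of measured cost-per-request samples. Requiring
    several consecutive breaches avoids paging on a single noisy sample.
    """
    if baseline_cost <= 0:
        raise ValueError("baseline_cost must be positive")
    if min_consecutive < 1:
        raise ValueError("min_consecutive must be at least 1")
    if len(recent_costs) < min_consecutive:
        return False  # not enough evidence yet
    limit = baseline_cost * threshold_ratio
    latest = recent_costs[-min_consecutive:]
    return all(sample > limit for sample in latest)


# Hypothetical samples: cost per request triples and stays there.
samples = [0.001, 0.001, 0.003, 0.003, 0.003]
alert = should_alert(baseline_cost=0.001, recent_costs=samples)
```

The trade-off is between sensitivity and noise: a lower `min_consecutive` fires sooner but is more likely to react to a transient blip.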

Why this mattered to leadership

For engineering leaders, the platform created a reliable basis for rollout decisions. For finance leadership, it created real-time visibility into inference economics. That combination is rare and extremely valuable.

Instead of asking for more capacity because “latency looks risky,” teams could now say:

- this model costs X per million requests

- this infrastructure class yields Y p99 latency

- this serving configuration pushes utilization from A to B

- this release is within or outside the acceptable production envelope

That is what turns a platform into governance infrastructure instead of internal tooling.

My role

I designed and implemented the measurement and optimization layer across the serving path:

- defined the shared metric model across teams and frameworks

- integrated PyTorch and ONNX profiling workflows

- built the Prometheus plus Kubecost measurement path for latency, throughput, utilization, and cost per request

- turned profiler output into concrete infra and batching recommendations

- helped move rollout decisions from opinion to measurable release criteria

The technical work mattered, but the bigger win was operational alignment. The platform made different teams comparable.

What this case proves

This case proves that inference cost control is not a finance-only problem and latency control is not an infra-only problem. Both become manageable when the platform measures them together at the model level.

It also proves something more practical: a benchmarking and observability layer can create immediate business value even before more ambitious platform work ships. By the end of this project, the company had a shared view of what “healthy inference” actually meant.

Bottom line

This was an internal ML platform project, but the outcome was directly commercial. The company reduced serving waste, stabilized tail latency, and built a release process that tied model behavior to real operating economics. That is what mature ML infrastructure is supposed to do: make production decisions safer, faster, and less political.

FAQ

What problem did this ML cost platform solve first?

It gave engineering and finance a shared view of model performance and unit economics, which made inference rollout decisions measurable instead of political.

Why was cost per request the key metric?

Latency and throughput by themselves can hide inefficient serving. Cost per request exposed the real production trade-off between model quality, infrastructure choice, and operational budget.

Was this only a dashboarding project?

No. The platform combined profiling, cost attribution, alerting, and release criteria so teams could actively change infrastructure and model configurations, not just observe them.