RAG Assistant for Catalog

Production RAG catalog assistant with hybrid retrieval, reranking, and cost-aware serving that cut zero-result searches and improved CTR inside a one-GPU operating envelope.

One-liner: In six weeks, this RAG catalog assistant cut zero-result searches from 30% to 9%, improved CTR by 1.6 percentage points, and held the production path inside a one-GPU budget.

Executive summary

The client was a U.S. retailer with roughly 9 million products and a search experience that failed too often on natural-language queries. About 30% of catalog searches ended in an empty result page. When the system did respond, p90 latency was about 1.6 seconds, which was slow enough to hurt sessions and conversion. On top of that, the team was burning about $45,000 per month on a cloud LLM setup that had not yet produced a durable production architecture.

The mandate was practical: build a retrieval-first catalog assistant that could improve discovery without turning search into an unbounded chat demo. The resulting system combined semantic retrieval, lexical fallback, structured filters, reranking, and a fine-tuned Mistral-7B response layer. It reached the business targets within the one-quarter window and created a path toward broader search modernization without exploding infrastructure cost.

| Metric | Before | After | Why it moved |
|---|---|---|---|
| Zero-result rate | 30% | 9% | Hybrid retrieval plus BM25 fallback recovered queries that previously died on lexical mismatch |
| p90 latency | 1.6 s | 0.8 s | Quantized serving, controlled batching, and a retrieval-first path reduced response time |
| CTR | 12.4% | 14.0% | Better candidate quality and reranking improved what users saw first |
| Cost per 1,000 queries | $2.2 | $1.2 | Self-hosted inference and budget-aware serving cut the cloud premium |
| GMV on 10% A/B slice | baseline | +6.3% | Search quality improvements propagated to commercial outcome |

For the broader design philosophy behind this kind of retrieval-first system, the closest companion on the site is Agentic Search in Production. For the operational side, the rollout discipline matches the production patterns described in MLOps for a Support RAG Agent in 2026.

Why the client needed an MVP fast

This was not a greenfield AI initiative. It was a rescue project for a search surface that was already leaking value.

- The catalog had grown large enough that lexical search alone no longer covered the real query distribution.

- Shoppers were increasingly typing natural-language questions instead of short keyword strings.

- Empty-result pages were suppressing sessions that should have converted.

- The existing cloud LLM setup was expensive but still failed to behave like a disciplined retrieval system.

The business goal was not to “add chat.” It was to prove, within one quarter, that a production RAG layer could reduce empty searches, improve click behavior, and do so under a hard operating-cost ceiling. That framing matters because it changes the architecture. The LLM becomes a bounded interpretation and response layer on top of retrieval, not the authority that decides what exists in the catalog.

Success criteria

The MVP had five targets from the start:

| Goal | Target | Result |
|---|---|---|
| Zero-result rate | below 10% | 9% |
| p90 latency | below 1 second | 0.8 s |
| Cost reduction | at least 30% | 45% |
| CTR lift | measurable positive delta | +1.6 pp |
| Production reliability | SLA 99.5% | 99.6% |

These targets forced a very specific operating model. Retrieval had to do most of the heavy lifting. The reranker had to improve candidate order without destabilizing latency. The generative layer had to stay within a tight runtime budget. That is exactly the kind of constraint set that separates an actual product case study from an AI-flavored prototype.

System architecture

The system was built as a retrieval-first catalog assistant rather than a free-form conversational app.

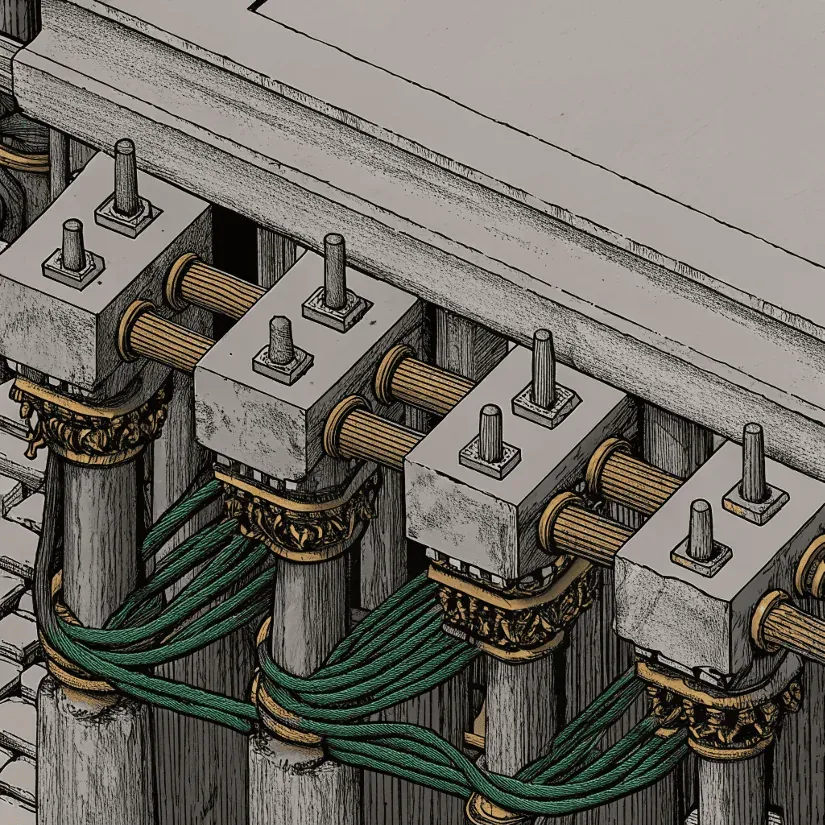

Query path for hybrid retrieval, fallback, reranking, and grounded answer generation.

1. Ingestion and indexing

Catalog records were chunked by product content and operational attributes through an Airflow pipeline. Embeddings were generated with an E5-small family encoder and written into a three-shard Weaviate cluster using HNSW. A full reindex of roughly 10 million records could complete in about 45 minutes without taking the system offline.

This mattered because the assistant needed fresher coverage than a traditional nightly batch search stack. If indexing lags, the generative layer becomes a liability: it speaks fluently about stale evidence.
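The ingestion step can be illustrated with a minimal sketch. It assumes a hypothetical record shape (`title`, `brand`, `description`, `attributes`) and a hypothetical batch size; neither comes from the project. The `passage: ` prefix follows the convention the published E5 models are trained with, but whether the pipeline used it is not stated here.

```python
from itertools import islice
from typing import Iterable, Iterator


def to_passage(record: dict) -> str:
    """Flatten one catalog record into a single embedding input.

    The field names are hypothetical; a real catalog schema will differ.
    """
    parts = [
        record.get("title", ""),
        record.get("brand", ""),
        record.get("description", ""),
    ]
    # Sort attributes so the same record always produces the same text.
    attributes = record.get("attributes", {})
    parts += [f"{key}: {value}" for key, value in sorted(attributes.items())]
    text = " | ".join(p.strip() for p in parts if p and p.strip())
    # E5-style encoders expect a "passage: " prefix on documents
    # and a "query: " prefix on search queries.
    return "passage: " + text


def batched(records: Iterable[dict], size: int) -> Iterator[list]:
    """Yield fixed-size batches so the encoder and index see bounded work."""
    iterator = iter(records)
    while batch := list(islice(iterator, size)):
        yield batch


if __name__ == "__main__":
    catalog = [
        {"title": "Trail Shoe", "brand": "Acme", "attributes": {"color": "red"}},
        {"title": "Cotton Shirt", "brand": "Northwind", "description": "Slim fit"},
        {"title": "Rain Jacket", "brand": "Acme"},
    ]
    for batch in batched(catalog, size=2):  # batch size is a hypothetical value
        print([to_passage(record) for record in batch])
```

Each batch would then be handed to the encoder and written to the vector index; those calls are left out because they depend on client versions this write-up does not specify.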

2. Retrieval

The retrieval path was intentionally hybrid.

- Semantic retrieval handled natural-language intent and loose wording.

- BM25 fallback protected exact brand, SKU, and attribute lookup cases.

- Structured filters applied price and brand constraints where they were extractable.

- A cross-encoder reranker compressed the candidate set to the most commercially and semantically useful results.

That combination is why the system could reduce zero-result pages without handing control to the LLM. On this site, the same principle appears again in the Search topic cluster: retrieval and ranking remain the contract, while language models extend interpretation and explanation.
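A rough sketch of the fallback logic described above, under stated assumptions: `semantic_search` is a hypothetical callable returning `(doc_id, similarity)` pairs, the thresholds are illustrative, and the tiny in-memory BM25 scorer stands in for whatever lexical engine the production system used.

```python
import math
from collections import Counter


def tokenize(text: str) -> list:
    return text.lower().split()


class BM25:
    """Minimal in-memory BM25 index (illustrative, not the production engine)."""

    def __init__(self, docs: dict, k1: float = 1.5, b: float = 0.75):
        self.k1, self.b = k1, b
        self.tf = {doc_id: Counter(tokenize(text)) for doc_id, text in docs.items()}
        self.length = {doc_id: sum(c.values()) for doc_id, c in self.tf.items()}
        self.n = len(docs)
        self.avg_length = sum(self.length.values()) / max(self.n, 1)
        # Document frequency: in how many documents each term appears.
        self.df = Counter(term for counts in self.tf.values() for term in counts)

    def search(self, query: str, top_k: int = 10) -> list:
        scores = {}
        for term in set(tokenize(query)):
            df = self.df.get(term, 0)
            if df == 0:
                continue
            idf = math.log(1 + (self.n - df + 0.5) / (df + 0.5))
            for doc_id, counts in self.tf.items():
                f = counts.get(term, 0)
                if f == 0:
                    continue
                norm = f + self.k1 * (
                    1 - self.b + self.b * self.length[doc_id] / self.avg_length
                )
                scores[doc_id] = scores.get(doc_id, 0.0) + idf * f * (self.k1 + 1) / norm
        return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:top_k]


def hybrid_search(query, semantic_search, bm25, min_score=0.75, min_hits=3, top_k=10):
    """Keep confident semantic hits; top up from BM25 when coverage is weak.

    min_score and min_hits are hypothetical thresholds.
    """
    hits = [
        (doc_id, "semantic")
        for doc_id, score in semantic_search(query)
        if score >= min_score
    ]
    if len(hits) < min_hits:
        seen = {doc_id for doc_id, _ in hits}
        hits += [
            (doc_id, "bm25")
            for doc_id, _ in bm25.search(query, top_k)
            if doc_id not in seen
        ]
    return hits[:top_k]


if __name__ == "__main__":
    docs = {"sku-1": "acme trail running shoe", "sku-2": "blue cotton shirt"}
    index = BM25(docs)
    # A semantic backend that finds nothing forces the lexical fallback.
    print(hybrid_search("ACME shoe", lambda q: [], index))
```

Tagging each hit with its source keeps the fallback observable, which makes it possible to track how often lexical retrieval is what prevents an empty page.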

3. Response layer

Ray Serve hosted an int8-quantized Mistral-7B model in a tightly controlled serving path. Time to first token was about 250 ms on the healthy path. The model had been fine-tuned on about 50,000 e-commerce dialogs, which improved response behavior, but the real production win came from discipline around when the model was allowed to answer.

It was not allowed to invent a catalog view outside the retrieved evidence set. The generation layer summarized, clarified, and packaged results. It did not replace search.
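One way to enforce that boundary is to build the prompt only from retrieved items and to refuse to build one at all when there is no evidence. The sketch below is an assumption about how this could look; the item fields (`title`, `sku`, `price`) and the instruction wording are hypothetical, not the project's actual templates.

```python
from typing import Optional


def build_grounded_prompt(query: str, evidence: list, max_items: int = 5) -> Optional[str]:
    """Return a prompt restricted to retrieved items, or None without evidence."""
    if not evidence:
        # No retrieved evidence means no generation call at all.
        return None
    lines = [
        f"[{i}] {item['title']} | sku={item['sku']} | price={item['price']}"
        for i, item in enumerate(evidence[:max_items], start=1)
    ]
    return "\n".join(
        [
            "Answer using only the numbered catalog items below.",
            "If they do not answer the question, say that nothing matching was found.",
            "",
            *lines,
            "",
            f"Shopper question: {query}",
        ]
    )


if __name__ == "__main__":
    items = [{"title": "Trail Shoe", "sku": "A1", "price": "79.00"}]
    print(build_grounded_prompt("waterproof shoes under 100 dollars", items))
    print(build_grounded_prompt("anything", []))  # None: the model is never called
```

Returning `None` rather than an empty prompt pushes the "no evidence, no answer" decision into the caller, where it can be logged and counted.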

Serving architecture built around a one-GPU operating envelope, rollout control, and observable runtime behavior.

Critical engineering decisions

Retrieval before generation

The easiest way to ship a more impressive demo would have been to let the model “reason” more and search less. That would have been the wrong production choice. The final design kept the search stack authoritative and used the LLM as a bounded layer on top.

Reranking over bigger generation

A cross-encoder reranker improved the top of the result set more reliably than spending more tokens in the response stage. This was the same kind of trade-off discussed in The Offline-to-Online Gap in Deep Learning Recommender Systems: better ranking control tends to survive production better than more sophisticated narration.
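The reranking step itself is small: score every (query, candidate) pair jointly, sort, and truncate. The sketch below shows that shape with a pluggable `score_pair` callable. The `overlap_score` function is only a stand-in so the example runs without a model; in a real deployment the callable would wrap the cross-encoder's pairwise score.

```python
from typing import Callable


def rerank(
    query: str,
    candidates: list,
    score_pair: Callable[[str, str], float],
    top_n: int = 10,
) -> list:
    """Order candidates by a pairwise relevance score and keep the best top_n."""
    scored = [(score_pair(query, candidate["text"]), candidate) for candidate in candidates]
    # Sort on the score only, so candidate dicts are never compared to each other.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [candidate for _, candidate in scored[:top_n]]


def overlap_score(query: str, text: str) -> float:
    """Stand-in scorer: share of query tokens found in the text.

    This is NOT a cross-encoder; it only makes the sketch self-contained.
    """
    query_tokens = set(query.lower().split())
    text_tokens = set(text.lower().split())
    return len(query_tokens & text_tokens) / len(query_tokens) if query_tokens else 0.0


if __name__ == "__main__":
    candidates = [
        {"sku": "a", "text": "blue cotton shirt"},
        {"sku": "b", "text": "waterproof trail running shoe"},
    ]
    best = rerank("waterproof running shoe", candidates, overlap_score, top_n=1)
    print(best[0]["sku"])
```

Because the scorer runs once per candidate, the size of the candidate set handed to the reranker is the main latency lever, which is why it sits after retrieval rather than replacing it.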

Cost-aware serving

The operating envelope was real. The GPU budget was not a guideline. It was a hard limit. The team therefore used quantization, batching discipline, and cautious warm-path behavior to preserve latency without moving back to a costly cloud-heavy approach.

Guardrails against hallucination

The system used a simple but effective confidence policy:

- no response without retrieved evidence

- fallback to lexical retrieval when semantic coverage was weak

- suppression of low-confidence generative claims

- rollback-ready serving via canary controls
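The first three rules of this policy can be read as a small decision function. The sketch below is one possible encoding, not the project's implementation; the thresholds `min_evidence` and `min_generate` and the action names are hypothetical.

```python
from enum import Enum


class Action(Enum):
    GENERATE = "generate a grounded answer"
    RESULTS_ONLY = "show results, suppress generated text"
    LEXICAL_FALLBACK = "retry with lexical retrieval"
    NO_ANSWER = "return no generated answer"


def choose_action(
    semantic_scores: list,
    lexical_tried: bool,
    lexical_hits: int = 0,
    min_evidence: float = 0.70,  # hypothetical: below this, a hit is not evidence
    min_generate: float = 0.85,  # hypothetical: below this, do not generate claims
) -> Action:
    strong = [score for score in semantic_scores if score >= min_evidence]
    if not strong:
        if not lexical_tried:
            # Weak semantic coverage: fall back to lexical retrieval first.
            return Action.LEXICAL_FALLBACK
        # Lexical results can be shown, but nothing is generated on top of them.
        return Action.RESULTS_ONLY if lexical_hits > 0 else Action.NO_ANSWER
    if max(strong) < min_generate:
        # Evidence exists, but confidence is too low for generative claims.
        return Action.RESULTS_ONLY
    return Action.GENERATE


if __name__ == "__main__":
    print(choose_action([], lexical_tried=False))
    print(choose_action([0.75], lexical_tried=False))
    print(choose_action([0.91, 0.60], lexical_tried=False))
```

Keeping the policy as a pure function of scores makes it easy to unit test and to replay against logged traffic when thresholds change.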

Customer complaints related to hallucinated answers stayed below 1%.

Rollout and observability

The serving layer used a FastAPI BFF, gRPC communication into Ray Serve, and KServe autoscaling with Argo Rollouts for progressive deployment. During evaluation the rollout ran as a roughly 50/50 traffic split, with rollback in under one minute when the canary path deviated from budget or relevance expectations.
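The rollback condition can be sketched as a plain comparison between canary and stable metric windows. This is an illustration of the decision logic only, not an Argo Rollouts configuration. The latency and zero-result ceilings reuse the MVP targets; the cost ceiling and relevance tolerance are hypothetical values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WindowStats:
    p90_latency_s: float
    zero_result_rate: float
    cost_per_1k_usd: float


P90_BUDGET_S = 1.0           # from the MVP target: p90 below 1 second
ZERO_RESULT_CEILING = 0.10   # from the MVP target: zero-result rate below 10%
COST_CEILING_USD = 1.50      # hypothetical budget ceiling
RELEVANCE_TOLERANCE = 0.02   # hypothetical allowed regression vs. stable


def rollback_reasons(canary: WindowStats, stable: WindowStats) -> list:
    """Return every reason the canary should be rolled back (empty means healthy)."""
    reasons = []
    if canary.p90_latency_s >= P90_BUDGET_S:
        reasons.append("p90 latency over budget")
    if canary.cost_per_1k_usd > COST_CEILING_USD:
        reasons.append("cost per 1,000 queries over budget")
    if canary.zero_result_rate >= ZERO_RESULT_CEILING:
        reasons.append("zero-result rate over ceiling")
    if canary.zero_result_rate > stable.zero_result_rate + RELEVANCE_TOLERANCE:
        reasons.append("zero-result rate regressed against stable")
    return reasons


if __name__ == "__main__":
    stable = WindowStats(p90_latency_s=0.8, zero_result_rate=0.09, cost_per_1k_usd=1.2)
    slow_canary = WindowStats(p90_latency_s=1.3, zero_result_rate=0.09, cost_per_1k_usd=1.2)
    print(rollback_reasons(slow_canary, stable))
```

Returning reasons instead of a boolean keeps the rollback auditable: the same list can be attached to the alert that triggered it.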

The observability stack included:

- Prometheus and Grafana for latency, throughput, and token cost

- Loki for logs

- Evidently drift checks on live query behavior

- production alerts around latency and degraded coverage
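As an illustration of the last item, the two alert conditions can be computed from a window of request logs. The sketch assumes a hypothetical log shape (one latency and one result count per request) and uses the nearest-rank percentile definition; the production stack would express the same checks as Prometheus alert rules rather than Python.

```python
def percentile_nearest_rank(values: list, pct: int):
    """Nearest-rank percentile: the smallest value with at least pct% of data at or below it."""
    if not values:
        raise ValueError("percentile of an empty window is undefined")
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # integer ceil(pct / 100 * n)
    return ordered[max(rank, 1) - 1]


def window_alerts(
    latencies_s: list,
    result_counts: list,
    p90_budget_s: float = 1.0,          # MVP target: p90 below 1 second
    zero_result_ceiling: float = 0.10,  # MVP target: zero-result rate below 10%
) -> list:
    """Check one window of traffic against the latency and coverage targets."""
    alerts = []
    p90 = percentile_nearest_rank(latencies_s, 90)
    zero_rate = sum(1 for count in result_counts if count == 0) / len(result_counts)
    if p90 >= p90_budget_s:
        alerts.append(f"p90 latency {p90:.2f}s is at or above {p90_budget_s:.2f}s")
    if zero_rate >= zero_result_ceiling:
        alerts.append(f"zero-result rate {zero_rate:.1%} is at or above {zero_result_ceiling:.0%}")
    return alerts


if __name__ == "__main__":
    latencies = [0.2] * 8 + [1.5] * 2        # two slow requests out of ten
    results = [3, 0, 5, 2, 0, 4, 1, 7, 2, 6]  # two empty result pages out of ten
    for alert in window_alerts(latencies, results):
        print(alert)
```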

One of the first post-launch issues was a latency spike caused by overly aggressive batching that overloaded the GPU path. The fix was operational rather than theoretical: throttle concurrency, add warm-up behavior, and keep the cost discipline intact. That is a useful pattern in its own right. Production systems usually fail first at the runtime boundary, not in the slide deck.
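The throttle-and-warm-up fix can be sketched with a semaphore that caps in-flight generation calls. This is a generic asyncio pattern under assumed names (`ThrottledGenerator`, `fake_infer`, and the limit of 2 are all hypothetical), not the Ray Serve configuration the team actually changed.

```python
import asyncio


class ThrottledGenerator:
    """Caps concurrent inference calls so bursts queue instead of piling onto the GPU."""

    def __init__(self, infer, max_in_flight: int = 4):
        self._infer = infer
        self._slots = asyncio.Semaphore(max_in_flight)

    async def warm_up(self, prompts):
        # Run a few requests serially before taking traffic, so the first
        # real users do not pay for cold caches or lazy initialization.
        for prompt in prompts:
            await self._infer(prompt)

    async def generate(self, prompt):
        async with self._slots:
            return await self._infer(prompt)


async def demo(n_requests: int = 10, max_in_flight: int = 2):
    in_flight = 0
    peak = 0

    async def fake_infer(prompt):
        # Stand-in for the model call; records the observed concurrency.
        nonlocal in_flight, peak
        in_flight += 1
        peak = max(peak, in_flight)
        await asyncio.sleep(0.01)
        in_flight -= 1
        return f"echo: {prompt}"

    generator = ThrottledGenerator(fake_infer, max_in_flight)
    await generator.warm_up(["warm-up query"])
    outputs = await asyncio.gather(
        *(generator.generate(f"q{i}") for i in range(n_requests))
    )
    return peak, outputs


if __name__ == "__main__":
    peak, outputs = asyncio.run(demo())
    print(f"peak concurrency: {peak}, responses: {len(outputs)}")
```

The design choice is to trade a small amount of queueing delay for a predictable ceiling on GPU load, which is usually the better deal when the latency target is a percentile.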

Business impact and economics

The commercial value of the system came from recovering previously lost search sessions and making those sessions more actionable.

- Cutting the zero-result rate from 30% to 9% recovered about 240,000 sessions per month.

- CTR improved by 1.6 percentage points.

- GMV lifted by 6.3% on the A/B-tested traffic slice.

- Cost per 1,000 queries dropped from $2.2 to $1.2.

- GPU CAPEX paid back in about 70 days.

The important point is not just that cost went down. It is that cost went down while the system became more useful. Cheap retrieval that does not help the business is trivia. Expensive retrieval that does not survive production is theater. This case landed in the narrow band where search quality, user behavior, and unit economics all moved in the same direction.
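The headline figures can be cross-checked with a few lines of arithmetic on the reported before and after values. The last line is only a back-of-envelope estimate: it assumes each recovered session corresponds to exactly one formerly empty search, which this write-up does not state.

```python
before = {"zero_result_rate": 0.30, "ctr": 0.124, "cost_per_1k_usd": 2.2}
after = {"zero_result_rate": 0.09, "ctr": 0.140, "cost_per_1k_usd": 1.2}

# Relative cost reduction per 1,000 queries (reported as 45%).
cost_reduction = (before["cost_per_1k_usd"] - after["cost_per_1k_usd"]) / before["cost_per_1k_usd"]

# CTR lift in percentage points (reported as +1.6 pp) and as a relative change.
ctr_lift_pp = (after["ctr"] - before["ctr"]) * 100
ctr_relative_lift = (after["ctr"] - before["ctr"]) / before["ctr"]

# Zero-result improvement in percentage points.
zero_result_drop_pp = (before["zero_result_rate"] - after["zero_result_rate"]) * 100

# ASSUMPTION: one recovered session per formerly empty search. Under that
# assumption, 240,000 recovered sessions imply this monthly search volume.
recovered_sessions_per_month = 240_000
implied_monthly_searches = recovered_sessions_per_month / (
    before["zero_result_rate"] - after["zero_result_rate"]
)

print(f"cost reduction:       {cost_reduction:.1%}")
print(f"CTR lift:             {ctr_lift_pp:.1f} pp ({ctr_relative_lift:.1%} relative)")
print(f"zero-result drop:     {zero_result_drop_pp:.0f} pp")
print(f"implied searches/mo:  {implied_monthly_searches:,.0f} (under the stated assumption)")
```

The cost figure rounds to the 45% reported in the success criteria, and the CTR delta matches the 1.6 percentage points quoted throughout.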

My role

I owned the core ML and productionization decisions on the retrieval and serving path:

- tuned Weaviate indexing and HNSW parameters

- prepared the dataset and fine-tuned Mistral-7B with LoRA for the int8 deployment path

- implemented the MiniLM-based reranking layer and prompt templates

- wired metrics into Prometheus and drift signals into Evidently

- analyzed the A/B results and turned them into a client-ready operating and ROI readout

This was not just model work. It was architecture, operating discipline, and measurement.

What this case proves

This project proved that a catalog assistant can be both commercially useful and operationally disciplined if retrieval remains primary.

It also created a credible next phase:

- personalization features based on purchase history

- upsell and cross-sell logic on top of the same retrieval core

- mobile SDK support

- reuse of the Helm-based deployment package for a second pilot environment

That is the right shape of a production AI case. The MVP is not an endpoint. It is a pressure-tested first operating model.

Bottom line

This RAG assistant did three things that matter in production: it recovered coverage, improved the top of the result set, and made the economics viable. The final system was not “agentic” in the loose demo sense. It was a bounded retrieval product with measurable gains in search quality, CTR, GMV, and operating cost.

For a CTO or Head of AI, that is the real signal: the architecture improved the business without giving up control.

FAQ

What made this RAG assistant production-ready instead of demo-ready?

The system shipped with clear retrieval fallback paths, reranking, observability, and rollout controls from day one. It was designed to protect search quality and operating cost at the same time.

Why did hybrid retrieval matter in this case?

Semantic retrieval improved recall on messy natural-language catalog questions, while BM25 fallback and structured filters preserved coverage on exact brand, price, and attribute constraints.

How did the project stay within a strict cost envelope?

The serving stack was tuned around a single-GPU operating target, with model quantization, batching controls, and selective use of the generative layer instead of treating the LLM as the primary search engine.