Training a Hybrid LLM and Recommender System with Semantic IDs

TL;DR: I trained Qwen3-8B to work with semantic IDs instead of opaque hash IDs. The model became effectively bilingual: it understands natural language and product identifiers. Result: recommendation can be steered through chat, with explanations and controlled output.

Semantic IDs are compelling because they replace opaque IDs with tokens that carry structure the model can learn. Instead of random hashes for products, you get token sequences that preserve neighborhood information. The core question was simple: can we train a hybrid LLM + recommender on behavioral data and keep recommendation quality while gaining controllability?

The answer was yes. The final model works as one system, not as an LLM wrapped in retrieval glue. Semantic IDs are part of its vocabulary, so it can consume interaction history, generate candidate IDs, and explain recommendations in one pass.

Code for data prep, model training, and chat inference. Note: this is a compact setup with lightweight fine-tuning, so prompt quality still matters.

Why this approach matters

This approach combines strengths from both systems:

- LLMs are flexible and good at language, but weak on catalog-specific behavior.

- Recommenders are strong on behavior prediction, but hard to control through natural language.

The pipeline is straightforward. First, extend the tokenizer with semantic ID tokens such as <|sid_0|>, <|sid_1|>, <|sid_2|>. Then continue pretraining so the model learns the mapping between catalog content and those IDs. Finally, train on user behavior sequences.

This hybrid is not guaranteed to beat a dedicated multi-stage recommender on raw top-K metrics. Its value is different: controllability and reasoning in the same interface used for search and recommendation.

User: I like animal and cute games.

<|rec|>The model interprets the natural-language intent (“animals and cute”) and generates semantic IDs for relevant games.

<|sid_start|><|sid_173|><|sid_324|><|sid_764|><|sid_768|><|sid_end|>, <|sid_start|><|sid_201|><|sid_397|><|sid_701|><|sid_768|><|sid_end|>, <|sid_start|><|sid_173|><|sid_305|><|sid_670|><|sid_768|><|sid_end|>Assistant: “Animal Crossing: New Leaf”, “DISNEY INFINITY Starter Pack 3DS”, “Nintendogs + Cats: Golden Retriever and New Friends”

Data and models

Preparing training data with product metadata and user sequences

We use data from the Video Games category of the dataset Amazon Reviews 2023 (Hou et al., 2024). The data contains rich product metadata and we can create sequences of user interactions.

The dataset contains 137k products with fields such as title, description, features, category, seller data, ratings, and price. After filtering to items with title length > 20 and description length > 100, 66k products remained. Behavioral logs contained 737k records. From that, I built 79k user purchase sequences with at least three valid products each. Mean sequence length was 6.5 items.

I also evaluated Amazon KDD Cup 2023 data (Amazon, 2023). It includes ~500k products, but multilingual processing increased complexity and missing product-category fields reduced utility for this setup. I chose Amazon Reviews to reduce implementation overhead and compute cost.

Semantic IDs from RQ-VAEs

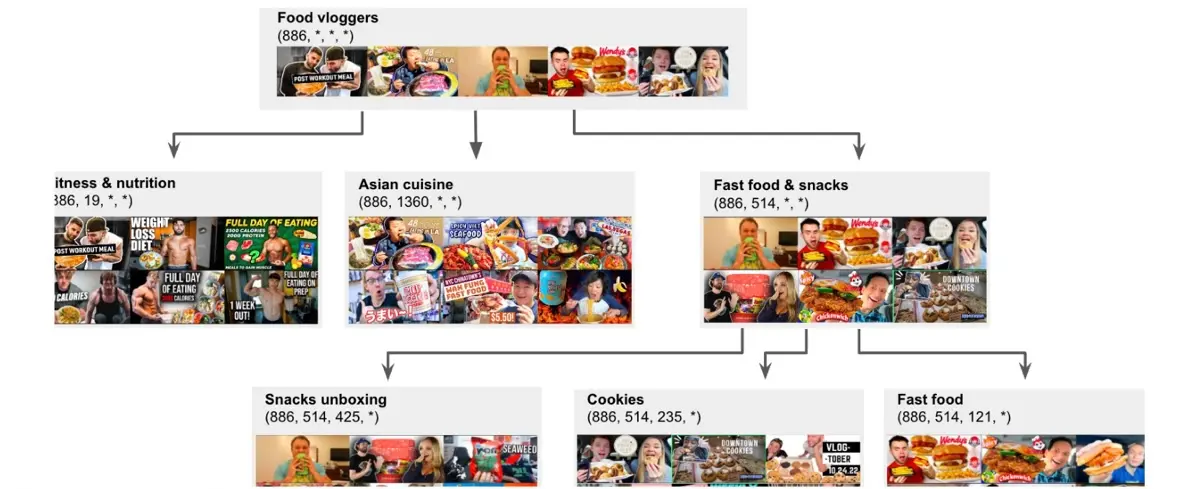

Semantic IDs (Rajput et al., 2023; Singh et al., 2023) are hierarchical representations that encode products into a sequence of tokens, replacing embeddings or IDs based on hashes. Unlike the regular product ID (B0040JHNQG), which has no meaning, the semantic ID (<|sid_0|><|sid_256|><|sid_512|><|sid_768|>) encodes information about the product. As a result of learning, similar products naturally share common prefixes, forming a tree structure, where each ID level represents increasingly detailed information about the product.

Hierarchical structure of semantic IDs for food videos (source)

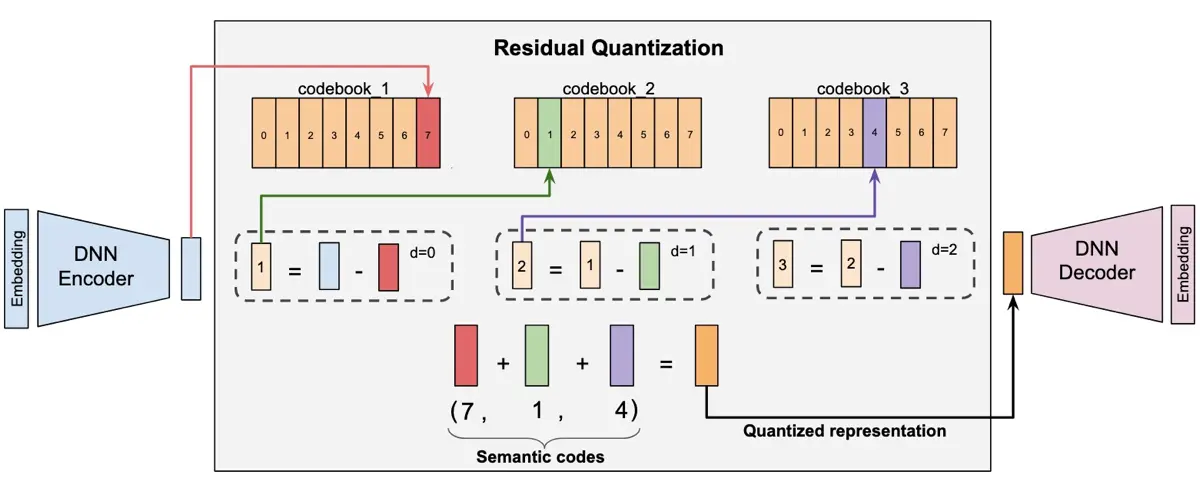

Residual Quantized Variational Autoencoders (RQ-VAEs; Zeghidour et al., 2021, Lee et al., 2022) convert continuous embeddings into discrete semantic IDs. We first encode product metadata (for example, title and description) into embeddings. RQ-VAE then applies hierarchical quantization to produce token sequences.

How RQVAEs convert embeddings into semantic IDs (source)

This is an iterative process. At the first level, the model finds the closest vector in the first codebook to the input embedding; this vector becomes the first Semantic ID token. The model calculates the quantization error, or residual, by subtracting the selected codebook vector from the input embedding. At the second level, it finds the closest vector in the second codebook to this remainder, which produces the second token. The process is repeated for each level, each step capturing finer details that previous levels missed.

The RQ-VAE loss function is worth discussing because understanding it is key to generating high-quality semantic IDs. The general loss function has two main components:

The first component, reconstruction loss, ensures that the decoder can accurately reconstruct the original product embedding () from the final quantized representation (). This is the standard square error:

The second component, quantization loss, measures how well the codebook vectors match the residuals generated by the encoder. It contains two terms:

The first term (), codebook loss, is responsible for training the codebook embeddings. It measures the distance between the encoder residual () and the selected codebook vector (). Stop-gradient is applied to the encoder output () to treat the residuals as a fixed target. Thus, the gradients only go to the codebook vector, bringing it closer to the encoder output.

The second term (), commitment loss, is responsible for training the encoder. It measures the same distance, but stop-gradient is applied to the codebook vector (). This prevents codebook updates and pushes the encoder to produce outputs, or commit, to vectors that already exist in the codebook. The hyperparameter controls the strength of this commitment penalty.

# PyTorch loss function (without recursive loop)

reconstruction_loss = F.mse_loss(x, x_reconstructed)

codebook_loss = F.mse_loss(residual.detach(), codebook_vector)

commitment_loss = F.mse_loss(residual, codebook_vector.detach())

quantization_loss = codebook_loss + commitment_weight * commitment_loss

total_loss = reconstruction_loss + quantization_lossThrough this process, RQ-VAE produces a semantic ID as a sequence of tokens, one from each quantization level. Because similar products share common prefixes, language models can better understand the relationships between products, which is also useful for tree search.

# How to hierarchically encode embeddings into semantic IDs

def encode_to_semantic_ids(self, x: Tensor) -> Tensor:

with torch.no_grad():

residual = self.encode(x)

indices_list = []

for vq_layer in self.vq_layers:

vq_output = vq_layer(residual)

indices_list.append(vq_output.indices)

residual = residual - vq_output.quantized

return torch.stack(indices_list, dim=-1)However, the practical problem is that this does not guarantee a unique ID for each product. In my experiments with a three-level code dictionary, where each level has 256 codes, we saw collisions on ~10% of the 66k products. To solve this, I added a fourth layer where I assign a sequentially increasing token to each ID to ensure that each product is uniquely identified.

SASRec, Qwen3-Embedding-0.6B, and Qwen3-8B

In addition to RQ-VAE, this setup uses three models. First, SASRec is trained on semantic IDs to validate representation quality against a baseline trained on regular IDs. Second, Qwen3-Embedding-0.6B generates product embeddings. Third, Qwen3-8B is tuned to understand and recommend products via semantic IDs.

SASRec (Kang & McAuley, 2018) is a sequential recommender inspired by the Transformer architecture. It encodes a user’s interaction history and uses a self-attention mechanism to weigh the most relevant past products to predict the next one. This allows the model to learn long-term dependencies in user behavior and outperform older recurrent models like RNN and GRU, while being more efficient due to parallelizability.

Qwen3-Embedding-0.6B (Zhang et al., 2025) is part of a series of embedding models available in sizes 0.6B, 4B and 8B. They are trained through a multi-step process that includes pre-training on synthetic data, then supervised fine-tuning and model fusion for robustness. The 8B achieves SOTA performance on the MTEB Multilingual benchmark.

Qwen3-8B (Yang et al., 2025) is a dense language model from the Qwen3 family. Despite being one of the smaller models, its post-training is optimized through strong-to-weak distillation on the Qwen3-235B-A22B and Qwen3-32B. This makes the Qwen3-8B relatively capable for its size, outperforming larger previous-generation models like the Qwen2.5-14B in more than half of the benchmarks evaluated, especially STEM and coding. Like other models in the Qwen3 series, Qwen3-8B has dual thinking and non-thinking modes.

Cleaning data and creating user sequences

First, we prepare product metadata to provide quality inputs to the semantic ID model. We start by excluding products with names shorter than 20 characters or descriptions shorter than 100 characters. This cut the number of products in half, from 137k to 66k unique products.

We then clean up the product descriptions using Gemini 2.5 Flash Lite (Comanici et al., 2025), fixing cut-off sentences, removing HTML, and reducing verbosity. This cut the average description length in half, from 1,038 to 538 characters. Similarly, we remove advertising text and standardize title formatting, turning verbose listings like “NEW! LIMITED! Sega Saturn RGB SCART LEAD CABLE…” into pure “Sega Saturn RGB SCART Cable”.

Then we enrich the data by extracting structured metadata, such as product type (Game, Hardware, Accessory), platform (PS4, Xbox, Wii), genre (Roguelike, Soulslike, Metroidvania), hardware type, brand, multiplayer modes, etc. This process had 98% coverage for platform information, 78% for brand identification, and 51% for genre classification.

Finally, for user sequences, we deduplicate by user and build interaction histories, yielding 91.5k sequences. We remove items without metadata and filter out sequences shorter than three products. We also cap sequence length at 100 products (only 28 sequences were trimmed). Final dataset size is 78.6k sequences, with median length 5 and mean length 6.5.

Training RQ-VAE to generate Semantic IDs

For product embeddings, I use Qwen3-Embedding-0.6B. The model supports task-specific instructions, so each input uses this prefix: “Given a product description, generate a semantic embedding that captures key features and characteristics.” Output embeddings are 1024-dimensional from last-token pooling, followed by L2 normalization.

RQ-VAE uses an encoder, three quantization levels with 256 codes each, and a symmetric decoder. For training stability, I use the rotation trick (Fifty et al., 2025) instead of the straight-through estimator for the gradient. Additional optimizations include k-means codebook initialization, resetting unused codes, and larger batch sizes. I also tested techniques that did not help, including EMA codebook updates and stopping gradients to the decoder.

The trained RQ-VAE achieved 89% unique semantic IDs on 66k products at three quantization levels. To resolve the remaining collisions, I added a fourth token that assigns a unique sequential ID (0, 1, 2, …) to any products that share the same first three codes. This ensures that each product has a unique 4-part semantic ID.

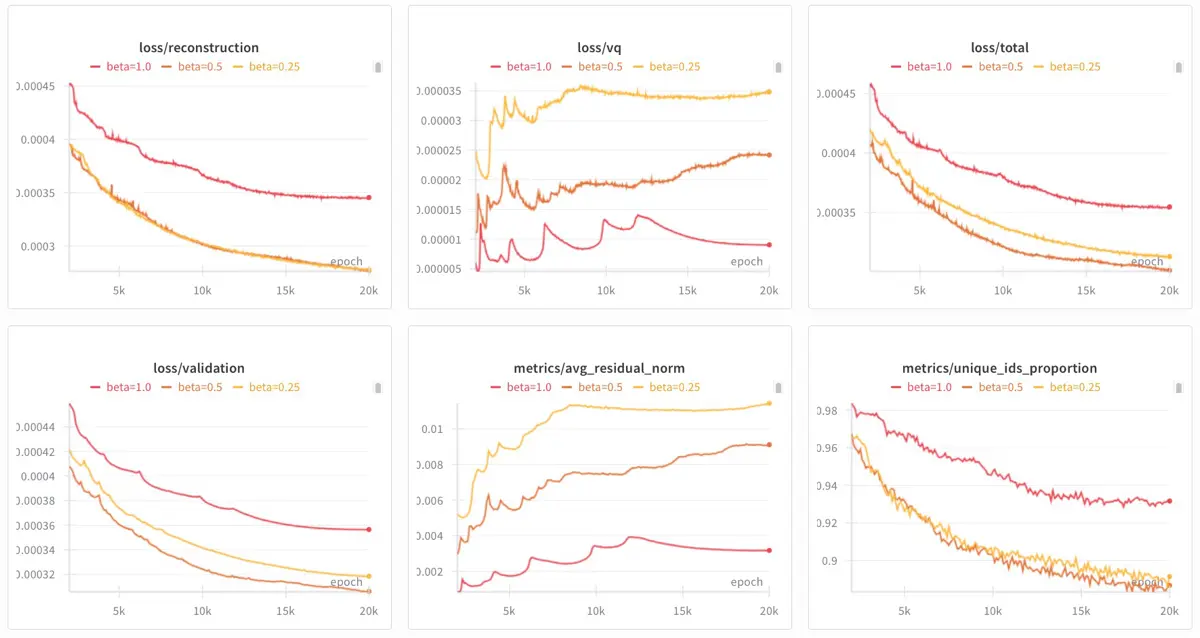

I ran dozens of experiments to tune RQ-VAE and improve semantic-ID quality. Key results:

I first tuned commitment weight , which balances reconstruction quality and codebook commitment. I tested 0.25 (yellow), 0.5 (orange), and 1.0 (red). Higher produced more unique IDs but worse validation loss. Lower improved uniqueness slightly but was less stable. On this dataset, gave the best loss-quality tradeoff, so later runs used . (This differs from earlier Semantic ID papers that used .)

Curves with beta = 0.25 (yellow), 0.5 (orange) and 1.0 (red)

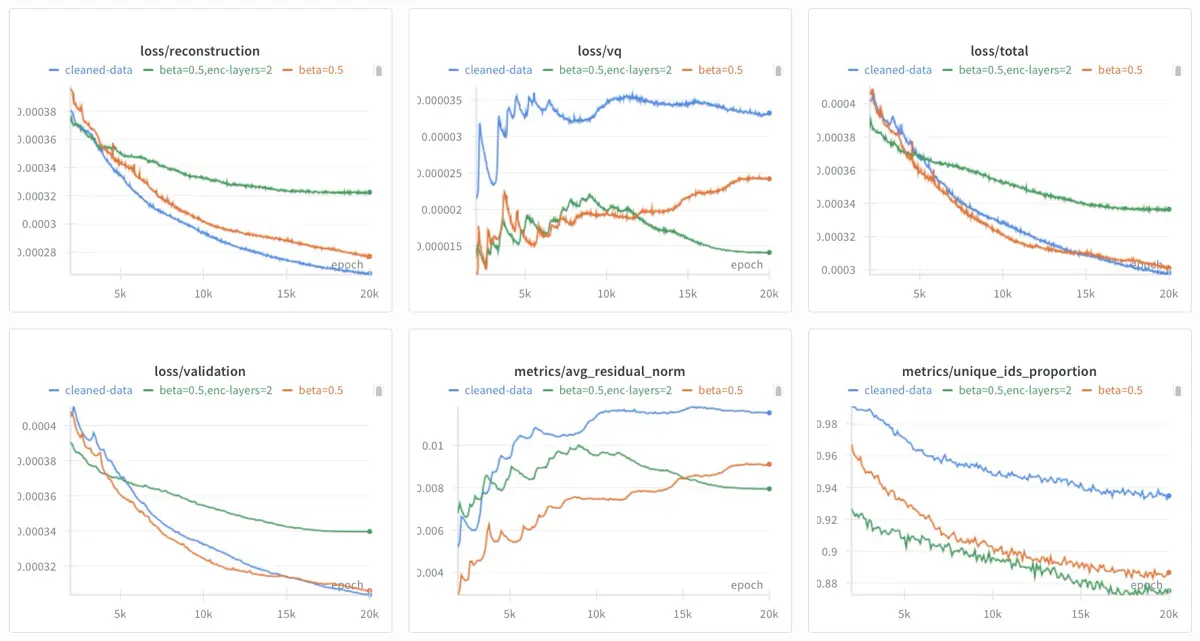

I also tested a smaller encoder and metadata cleaning. The smaller encoder (green) performed worse, with higher validation loss and fewer unique IDs. Data cleaning (blue) improved both reconstruction and validation loss and increased the fraction of unique IDs. I used this run for the final RQ-VAE.

Curves with beta = 0.5 (orange), smaller encoder (green) and cleaned data (blue)

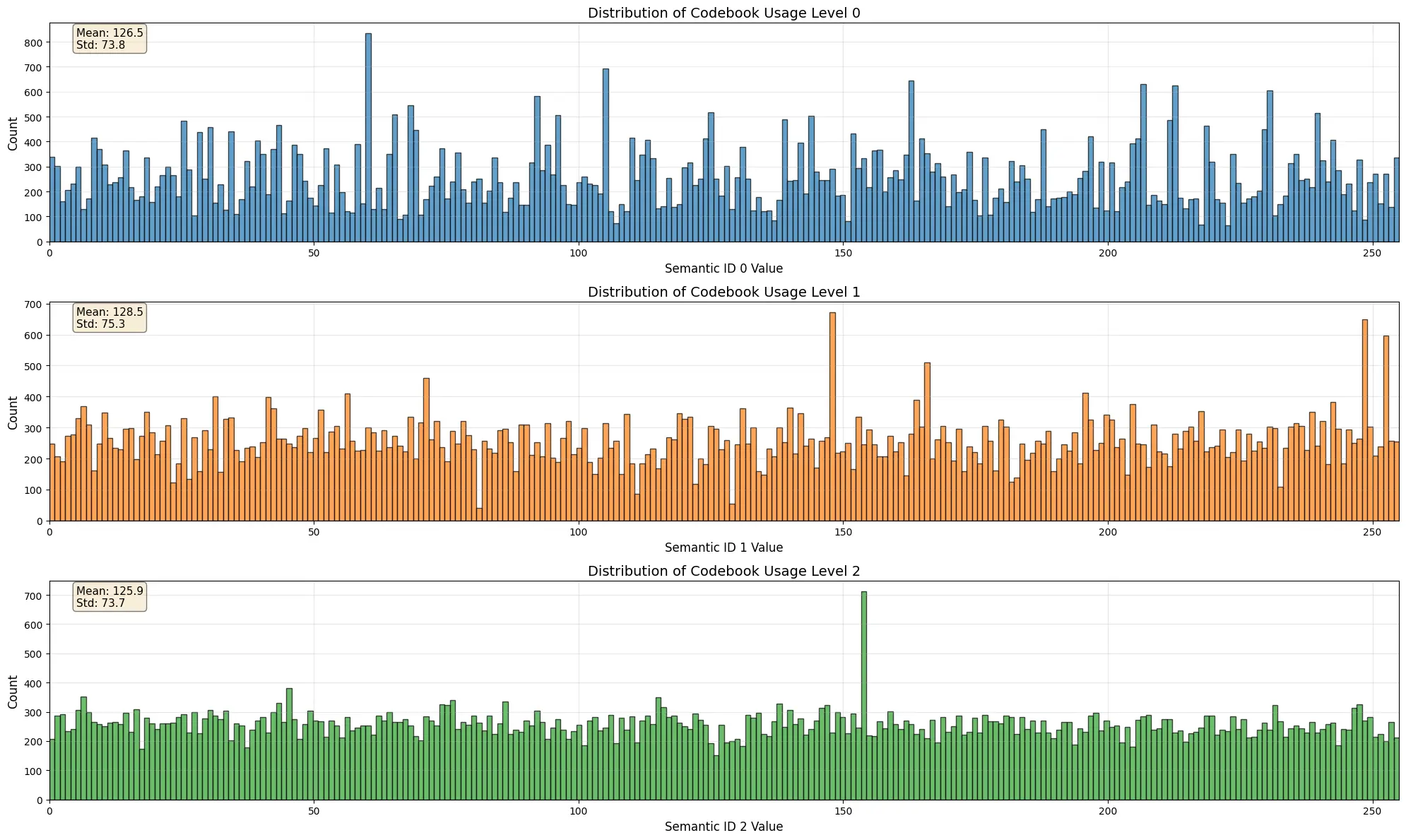

Another way to evaluate RQ-VAEs is to check the use of a codebook. Relatively even usage across all codes suggests that the model is using its full expressive capacity. The final RQ-VAE demonstrates this well; across all three quantization levels, usage is evenly distributed with low variance.

RQVAE example with well-distributed codebook usage

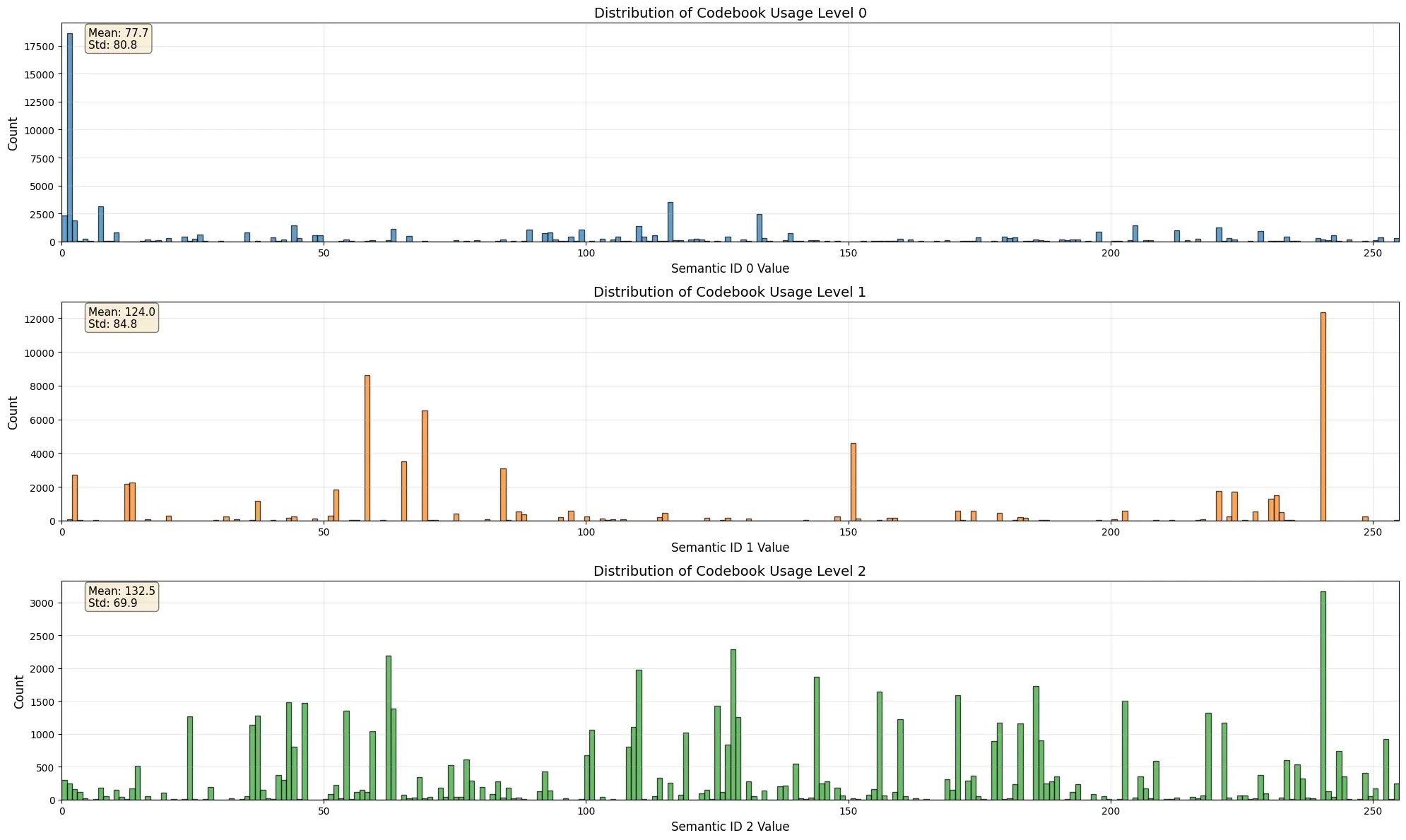

In contrast, a poorly converging RQ-VAE will have sparse and highly concentrated code usage. The histogram below shows this failure mode, where a few codes are reused and the majority of the codebook is ignored.

RQVAE example with poorly distributed codebook usage

With the trained RQ-VAE, all product embeddings are encoded into semantic IDs, for example <|sid_start|><|sid_191|><|sid_260|><|sid_716|><|sid_768|><|sid_end|>. I then converted 78.6k user purchase sequences from regular IDs to semantic-ID sequences. These sequences are used for both SASRec validation and additional Qwen3-8B training.

Training SASRec on regular product IDs vs. semantic IDs

To validate that our semantic IDs capture meaningful relationships between products, we train two variants of SASRec: a baseline trained on regular product IDs and a variant trained on semantic IDs, then compare their performance.

SASRec Baseline follows a standard architecture. It treats each product as a separate atomic unit and learns embeddings from scratch. This is based purely on behavioral patterns. The model uses 2 causal self-attention blocks, a 64-dimensional hidden state, and is trained on a discriminative task that separates the next item in a sequence from randomly sampled negatives using binary cross-entropy (BCE) loss.

# SASRec baseline prediction function

def predict(self, input_ids: torch.Tensor, candidate_ids: torch.Tensor) -> torch.Tensor:

"""Predict scores for candidate products.

Args:

input_ids: Product sequences [batch_size, seq_length]

candidate_ids: Candidate products for scoring [batch_size, num_candidates]

Returns:

Scores for each candidate [batch_size, num_candidates]

"""

# Get sequence representations

hidden_states = self.forward(input_ids) # [B, T, H]

# Use only the last hidden state for prediction

final_hidden = hidden_states[:, -1, :] # [B, H]

# Get candidate embeddings

candidate_embs = self.item_emb(candidate_ids) # [B, C, H]

# Compute scores via dot product

scores = torch.bmm(candidate_embs, final_hidden.unsqueeze(-1)).squeeze(-1) # [B, C]

return scoresIn contrast, SASRec on Semantic IDs reformulates recommendation as a conditional generative task. Instead of scoring products, its goal is to generate a 4-part semantic ID of the next product, token by token. This requires a larger architecture with 4 transformer blocks and 384-dimensional hidden states. Unlike TIGER paper, which uses the T5 encoder-decoder, this variant of SASRec is decoder-only, making it a more direct and fair comparison to the SASRec baseline. Because we’re using semantic IDs, instead of embedding each of the 66k products, we only have 1,024 token-level embeddings, with 256 tokens per level in the semantic ID.

# SASRec on semantic IDs prediction function

def predict_next_item(self, input_ids: torch.Tensor, teacher_forcing: bool = True,

target_tokens: Optional[torch.Tensor] = None) -> Dict[str, torch.Tensor]:

"""Predict next-item semantic ID tokens sequentially.

Args:

input_ids: Token sequences [batch_size, seq_length * num_levels]

teacher_forcing: Use ground truth conditioning during training

target_tokens: Ground truth tokens for next item [batch_size, num_levels]

Returns:

Dictionary with logits for each level

"""

hidden_states = self.forward(input_ids) # [B, T*L, H]

# Last-position representation as context for prior items

last_hidden = hidden_states[:, -1, :] # [B, H]

predictions = {}

# Sequential generation: each level conditioned on previous levels

for level in range(self.num_levels):

if level == 0:

# Level 0: predict directly from sequence representation

context = last_hidden

else:

# Levels 1-3: condition on previous predicted/ground-truth tokens

if teacher_forcing and target_tokens is not None:

# Use ground truth from previous levels during training

prev_tokens = target_tokens[:, :level] # [B, level]

else:

# Use predicted tokens during inference

prev_tokens = self._sample_from_predictions(predictions, level)

prev_embeds = self.token_emb(prev_tokens) # [B, level, input_dim]

prev_embeds_projected = self.input_projection(prev_embeds) # [B, level, H]

prev_context = prev_embeds_projected.mean(dim=1) # [B, H]

# Combine with sequence context

combined = torch.cat([last_hidden, prev_context], dim=-1) # [B, 2*H]

context = self.context_combiners[level - 1](combined) # [B, H]

# Predict current level

logits = self.level_heads[level](context) # [B, codebook_size]

predictions[f"logits_l{level}"] = logits

return predictionsThis generative approach changes both training and evaluation. The objective is no longer a simple BCE loss, but the sum of cross-entropy losses across semantic ID levels, forcing the model to predict the full sequence correctly. Evaluation is also different: instead of a dot-product item score, each candidate score is a joint log-probability computed from per-token generation probabilities. To stabilize training, teacher forcing uses the previous ground-truth token to guide prediction at the next level.

For evaluation, I used a validation set with 500 negative samples per positive next product. The baseline SASRec outperformed the semantic-ID variant on standard ranking metrics. Still, semantic IDs offer one important benefit: cold-start generalization via shared token prefixes across similar products. The tradeoff is compute: this approach needs about 4x more predictions per item and higher training/inference cost.

| Model | Hit@10 | NDCG@10 | MRR | Mean Rank | Median Rank |

|---|---|---|---|---|---|

| SASRec Baseline | 0.2812 | 0.1535 | 0.1300 | 138.9 | 41.0 |

| SASRec on Semantic ID | 0.2020 | 0.1138 | 0.1007 | 179.7 | 79.0 |

Additional training of Qwen3-8B for semantic ID recommendation

Next, I train the language model to “speak” semantic IDs. The goal is to make Qwen3-8B bilingual in natural language and semantic-ID tokens.

First, we build a training dataset of 4.2 million conversational examples to teach the model semantic IDs and recommendations. The data covers several types of tasks, including mapping semantic IDs to their corresponding text descriptions (and vice versa), predicting the next product in a user’s funnel, understanding relationships between product categories, and multi-step reasoning. Each of these examples is formatted as conversations with a system prompt, a user instruction, and an assistant response.

Training runs in two phases. Phase 1 expands the vocabulary: 1,027 semantic-ID tokens are added (<|sid_start|>, <|sid_end|>, <|sid_0|> … <|sid_1023|>, <|rec|>), and the embedding matrices are resized. In this phase, only input/output embeddings are trainable (1.23B params, 15.3% of total) for 1,000 steps at a higher learning rate.

After semantic-ID embeddings are initialized, Phase 2 fine-tunes all 8.3B parameters. This stage uses a lower learning rate, 3% warmup, and 8-bit AdamW for memory efficiency. Training runs for three epochs on the full dataset. Custom callbacks track gradient norms, hierarchical recommendation accuracy, and fixed-set generation samples.

Communicating with the LLM-recommender hybrid

The retrained model can then be used to recommend products and speak using semantic IDs and natural language. Since semantic IDs are a native part of its vocabulary, the model generates recommendations by simply predicting the next ID in the sequence, token by token. For interactive sessions, the chat interface translates the raw model output (which contains semantic IDs) back into product names, creating a human-readable conversation.

User: “Halo 3 Limited Edition -Xbox 360”, “Lost Planet: Extreme Condition - Xbox 360”, “Lost Planet: Extreme Condition Collector’s Edition -Xbox 360”

<|rec|>The model takes the history of user interactions (a sequence of Xbox 360 sci-fi shooters) and predicts the next product.

<|sid_start|><|sid_64|><|sid_351|><|sid_733|><|sid_768|><|sid_end|>Assistant: “Mass Effect - Xbox 360”

Basic tests show the model can generate next-product recommendations from user history and adjust them with natural-language constraints, such as platform (“Xbox games like…”) or genre (“I like animal and cute games.”).

User: Xbox games similar to “The Legend of Zelda: Ocarina of Time 3D”:

<|rec|>The model takes a seed product (“The Legend of Zelda”) and a natural language constraint (“Xbox”) to direct recommendations to another platform.

<|sid_start|><|sid_64|><|sid_271|><|sid_576|><|sid_768|><|sid_end|>, <|sid_start|><|sid_64|><|sid_435|><|sid_615|><|sid_768|><|sid_end|>, <|sid_start|><|sid_167|><|sid_271|><|sid_578|><|sid_768|><|sid_end|>Assistant: “Fallout: New Vegas - Xbox 360 Ultimate Edition”, “Halo 4 - Xbox 360 (Standard Game)”, “Halo Reach - Legendary Edition”

The model can also explain its recommendations, describe the relationship between two products, and even suggest creative names and descriptions for sets of products.

User: I just finished “Dragon Quest Heroes II Explorer’s Edition - PlayStation 4”. Suggest another

<|rec|>and explain why:The model performs a hybrid task where it generates a relevant semantic ID and then provides an explanation for the recommendation.

<|sid_start|><|sid_139|><|sid_289|><|sid_534|><|sid_768|><|sid_end|> If you liked Dragon Quest Heroes II, you might like Nights of Azure because both are action RPGs for PlayStation 4 with a focus on combat system and character progression. Both games offer narrative a guided experience with a strong emphasis on combat mechanics, assuming the player's general interest in the genre.Assistant: “Nights of Azure - PlayStation 4”

If you liked Dragon Quest Heroes II, you might like Nights of Azure because both are action RPGs for PlayStation 4 with a focus on combat and character progression. Both games offer narrative-driven experiences with a strong emphasis on combat mechanics, suggesting the player’s general interest in the genre.

Even if it does not match the raw precision of a specialized recommender, this interface lets users discover catalog items in multi-turn dialogue, unifying search, recommendation, and chat.

All of this runs through the model directly, without external intent classifiers, routing layers, or retrieval wrappers.

[TURN 1] User: Looking for games similar to Mario Kart.

<|rec|>The model generates semantic IDs for three racing-game candidates.

<|sid_start|><|sid_131|><|sid_503|><|sid_738|><|sid_768|><|sid_end|>, <|sid_start|><|sid_131|><|sid_260|><|sid_577|><|sid_768|><|sid_end|>, <|sid_start|><|sid_145|><|sid_416|><|sid_671|><|sid_768|><|sid_end|>Assistant: “Need for Speed Most Wanted - Sony PSP”, “Need for Speed Hot Pursuit 2 - PlayStation 2”, “Burnout Legends - Nintendo DS”

[TURN 2] User: Is there something similar, but for Xbox?

The model uses conversation history and the new “Xbox” constraint to generate semantic IDs for relevant Xbox arcade racers.

<|sid_start|><|sid_183|><|sid_461|><|sid_517|><|sid_768|><|sid_end|>, <|sid_start|><|sid_183|><|sid_461|><|sid_562|><|sid_768|><|sid_end|>, <|sid_start|><|sid_183|><|sid_461|><|sid_616|><|sid_768|><|sid_end|>Assistant: “Need for Speed Carbon - Xbox 360”, “Project Gotham Racing 3 - Xbox 360”, “Forza Motorsport 4 - Xbox 360”

[TURN 3] User: Suggest a name and description for the set.

The model understands that the context has shifted from recommendation to the creative task of text generation.

Assistant: Xbox Racing Legends: Speed & Style Pack

Conclusion

Training a hybrid LLM-recommender on semantic IDs opens a practical design space. The model may underperform specialized recommenders on raw ranking metrics, but it gains controllability, explainability, and a unified UX for search, recommendation, and chat.

With more compute, I would test sentence-piece tokenization for semantic IDs, as suggested in prior work by DeepMind and YouTube, and run larger-scale experiments focused on cold-start behavior. I would also train on multi-million-item catalogs and broader task mixtures to measure scaling behavior more rigorously.

Links

- Hou, Yupeng, et al. “Bridging Language and Items for Retrieval and Recommendation”. arXiv:2403.03952, 2024.

- Amazon. “Amazon KDD Cup ‘23 - Multilingual Recommendation Challenge Dataset”. AIcrowd, 2023.

- Rajput, Shashank, et al. “Recommender Systems with Generative Retrieval”. arXiv:2305.05065, 2023.

- Singh, Anima, et al. “Better Generalization with Semantic IDs”. arXiv:2306.08121, 2024.

- Zeghidour, Neil, et al. “SoundStream: An End-to-End Neural Audio Codec”. arXiv:2107.03312, 2021.

- Lee, Doyup, et al. “Autoregressive Image Generation Using Residual Quantization”. arXiv:2203.01941, 2022.

- Kang, Wang-Cheng, and Julian McAuley. “Self-Attentive Sequential Recommendation”. arXiv:1808.09781, 2018.

- Vaswani, Ashish, et al. “Attention Is All You Need”. arXiv:1706.03762, 2017.

- Zhang, Yanzhao, et al. “Qwen3 Embedding: Advancing Text Embedding and Reranking”. arXiv:2506.05176, 2025.

- Yang, An, et al. “Qwen3 Technical Report”. arXiv:2505.09388, 2025.

- Comanici, Gheorghe, et al. “Gemini 2.5: Pushing the Frontier”. arXiv:2507.06261, 2025.

- Fifty, Chris, et al. “Efficient Training of Language Models with Gradient Quantization”. arXiv:2410.06424, 2025.