RAG Assistant for Catalog

MVP chat search with deployment automation, experiments, and quality monitoring

One-liner: In six weeks, we deployed a RAG MVP for a retail client, cut empty searches roughly threefold, and stayed within a single-GPU budget.

What the system does in simple terms

Problem: 30% of search queries in the online store return empty results, and the RAG assistant for the 9M-product catalog responds slowly. Users leave rather than wait 1.6 seconds for a response, and the cloud GPT-4 license costs $45k per month.

Solution: a smart chat search understands natural-language questions and finds relevant products in 0.8 seconds. The system combines semantic search by meaning, filtering by price and brand, and reranking of results for higher accuracy.
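The three stages named above (attribute filtering, semantic retrieval, reranking) can be sketched in a few lines. This is a minimal illustration, not the production pipeline: the `Product` type, the toy 3-dimensional embeddings, the `search` function, and the keyword-overlap reranker are all hypothetical stand-ins for a real encoder, vector index, and reranking model.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass
class Product:
    name: str
    brand: str
    price: float
    embedding: list[float]  # toy vector; a real system would use a learned encoder


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec, query_terms, catalog, max_price=None, brand=None, top_k=2):
    # Stage 1: hard filters on structured attributes (price, brand).
    candidates = [
        p for p in catalog
        if (max_price is None or p.price <= max_price)
        and (brand is None or p.brand == brand)
    ]
    # Stage 2: semantic retrieval, ranking candidates by cosine similarity.
    by_similarity = sorted(
        candidates, key=lambda p: cosine(query_vec, p.embedding), reverse=True
    )
    shortlist = by_similarity[: top_k * 2]

    # Stage 3: rerank the shortlist. Assumption: keyword overlap stands in
    # for a real reranking model here.
    def rerank_score(p):
        overlap = len(query_terms & set(p.name.lower().split()))
        return cosine(query_vec, p.embedding) + 0.1 * overlap

    return sorted(shortlist, key=rerank_score, reverse=True)[:top_k]


# Hypothetical four-item catalog for illustration only.
CATALOG = [
    Product("red running shoes", "Acme", 59.0, [0.9, 0.1, 0.0]),
    Product("blue running shoes", "Acme", 120.0, [0.8, 0.2, 0.0]),
    Product("leather office shoes", "Bolt", 80.0, [0.3, 0.7, 0.1]),
    Product("steel water bottle", "Acme", 15.0, [0.0, 0.1, 0.9]),
]

results = search([1.0, 0.0, 0.0], {"running", "shoes"}, CATALOG, max_price=100.0)
print([p.name for p in results])
# → ['red running shoes', 'leather office shoes']
```

Filtering before the similarity step keeps the candidate set small, which is one plausible way to hold latency down on a large catalog; the blue shoes are excluded by the price cap even though they match the query well.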

Savings: empty results fell from 30% to 9%, bringing in 240k additional sessions per month. CTR rose by 1.6 percentage points and GMV by 6.3%. Cost dropped from $2.20 to $1.20 per 1,000 queries, with payback in 70 days.
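For readers who want the relative figures behind these numbers, the short calculation below derives them from the values stated above. It uses only the reported before and after figures; the helper name `relative_change` is illustrative.

```python
def relative_change(before, after):
    """Relative change from `before` to `after` (negative means a decrease)."""
    return (after - before) / before


# Empty-result rate: 30% of queries before, 9% after.
empty_rate_change = relative_change(0.30, 0.09)
reduction_factor = 0.30 / 0.09

# Cost per 1,000 queries: $2.20 before, $1.20 after.
cost_change = relative_change(2.2, 1.2)

print(f"Empty results: {empty_rate_change:.0%} ({reduction_factor:.1f}x fewer)")
# → Empty results: -70% (3.3x fewer)
print(f"Cost per 1,000 queries: {cost_change:.0%}")
# → Cost per 1,000 queries: -45%
```

The 3.3x factor is what the one-liner rounds to "threefold". The 70-day payback and the session gain depend on traffic volume and project cost, which are not given here, so they are not recomputed.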

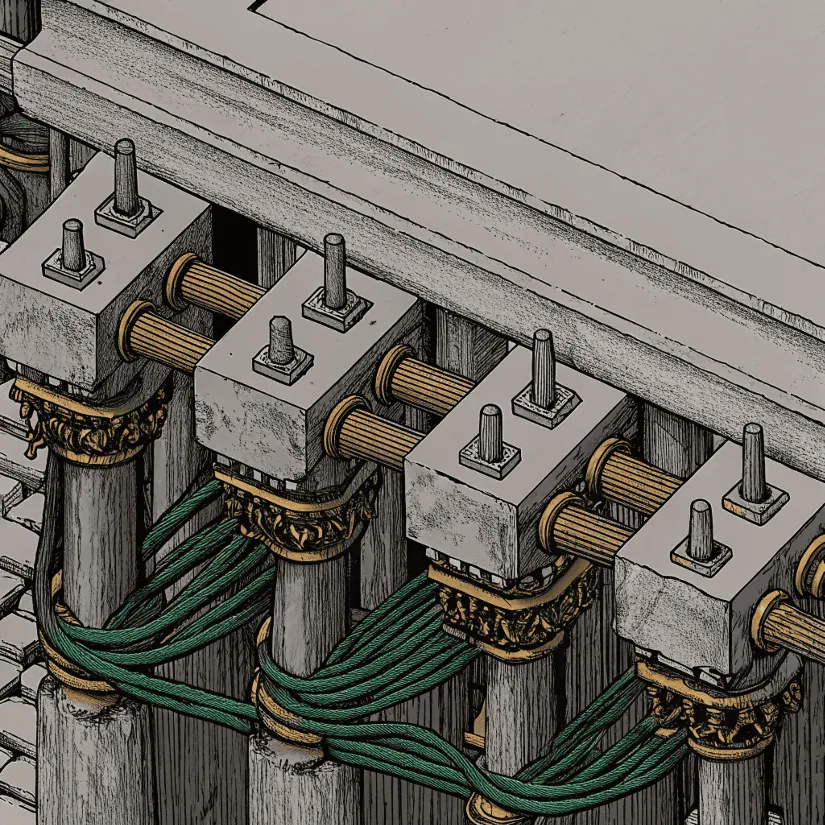

ML part: the system uses machine learning to understand query meaning, vector search to find similar products quickly, and the Mistral-7B language model to generate readable answers. The model was trained on 50k e-commerce dialogues and keeps learning from user clicks.

How to use: 1) ask a question in the search box; 2) get relevant products in 0.8 seconds; 3) the system learns from your clicks.

FAQ

What business problem did this RAG assistant solve first?

It targeted high zero-result search rates and slow response time in a large retail catalog, improving product discovery and reducing abandonment.

How was quality balanced against infrastructure cost?

The system used hybrid retrieval, model routing, and efficient serving, so relevance improved while the system stayed within a strict single-GPU operating envelope.

What made the MVP production-ready?

Automated deployment, monitored quality signals, and measurable release metrics were built in from day one, not added after launch.